To demonstrate this, Ginger ran a simple but revealing experiment using a short sample of his own fully human-written prose. He submitted the paragraph to several popular AI detection platforms and received dramatically conflicting results. Some declared the text unquestionably human, while others insisted it was AI-generated. If tools cannot reliably identify writing that is one hundred percent human, what does that mean for authors who may one day be asked to defend their integrity? This experiment raises serious concerns about false accusations, misplaced confidence in flawed technology, and what it will take to protect genuine human authors in an increasingly AI-saturated landscape.

Do AI Detection Platforms Actually Work?

For most of publishing history, an author accused of plagiarism only had to defend against the charge that they had copied another writer’s work, and that could usually be settled by comparing the two texts. With the rise of AI, however, authors now face a different challenge: proving that they wrote their own words without artificial assistance. That is far less straightforward. Despite a growing number of tools that claim they can detect AI-generated writing, their conclusions are often inconsistent and highly subjective.

Recently, I was asked to write a blurb for a fantasy book by an author who made it very clear that I wasn’t to use AI to help with the writing in any way. He’d be checking with an AI detector to make sure.

(Which, to be fair, I consider a completely reasonable request. The whole purpose of hiring a human is to receive human-written prose.)

Now, I wasn’t concerned, since I don’t use AI to write my blurbs. It’s not that AI can’t produce something that seems like an effective book blurb, but I have always found the different AI platforms incapable of understanding the context of what makes a book sound really compelling. Their blurbs always come across as kind of generic.

As I wrote in this article, the most important part of a compelling blurb is the “central question” that defines your protagonist’s conflict, and AI doesn’t really understand how to get to the bottom of that question.

Often the author doesn’t either, because they’re so close to their work (which is why I always recommend getting somebody else to write your blurb!)

You generally have to read a manuscript with fresh eyes to be able to “read between the lines” and figure out the core message. It’s something that the author themselves might only recognize after they’ve completed writing the book!

But given that AI is unable to write an effective blurb (and I doubt it ever will) I started to wonder just how effective these so-called AI detectors are. It led me to conduct an experiment that produced some very interesting results.

I decided to test some popular AI detection platforms with a section of prose that was written completely independently of AI. I sat down with a 3-minute timer and hammered out a paragraph about something I’d been researching recently: the history of the de Havilland Mosquito bomber. (I know, I know, I’m a history nerd.)

Here’s what I wrote. Don’t judge it too harshly, because it took three minutes and I didn’t expect to ever publish it:

My mother was adopted in 1945 because her biological father was killed during the war. He was a bomber pilot, part of the Royal Air Force Pathfinder force, and I believe he flew a two-engined Mosquito bomber that would drop flares on German targets to help subsequent waves of larger four-engined bombers drop their payloads more effectively. The Mosquito was often described as “the wooden wonder” because it was built from wood, rather than aluminum. It carried very little defensive armament, but was one of the most successful bombers of WW2 because the light construction and powerful twin Merlin engines allowed it to fly faster than almost any other aircraft of the time.

I then Googled “AI Detection” and fed what I’d just written into the platforms suggested.

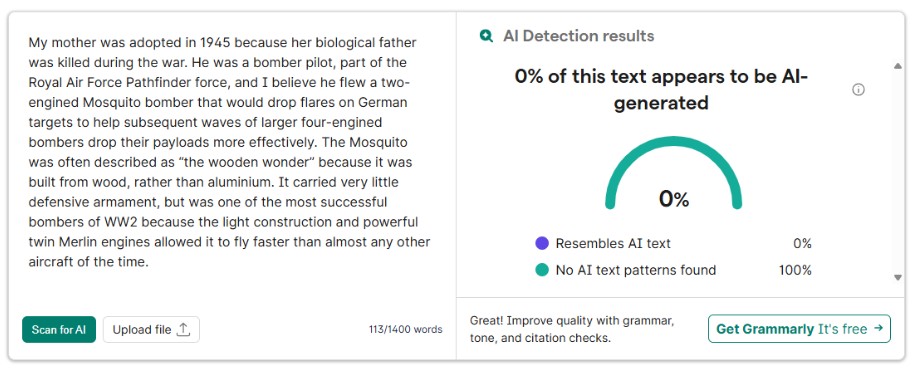

The first one was Grammarly, which is probably the best-known and most widely adopted of the AI-powered writing tools available. I was happy that this tool proclaimed that 0% of the text I’d written was generated by AI.

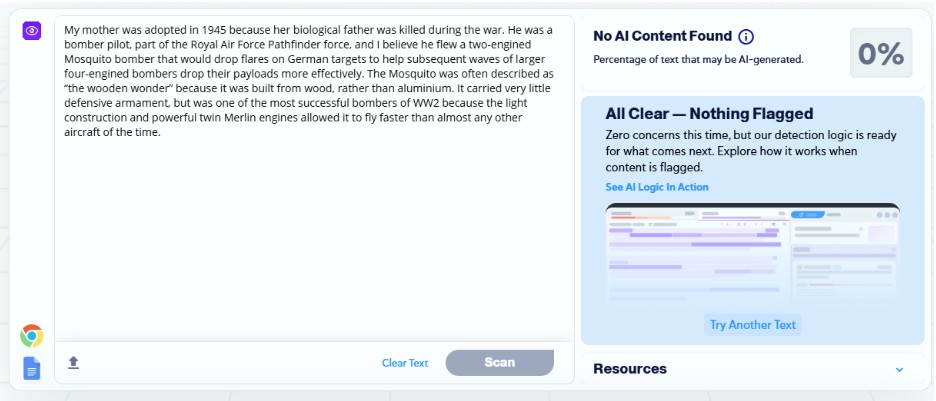

This was a verdict mirrored by Copyleaks as well.

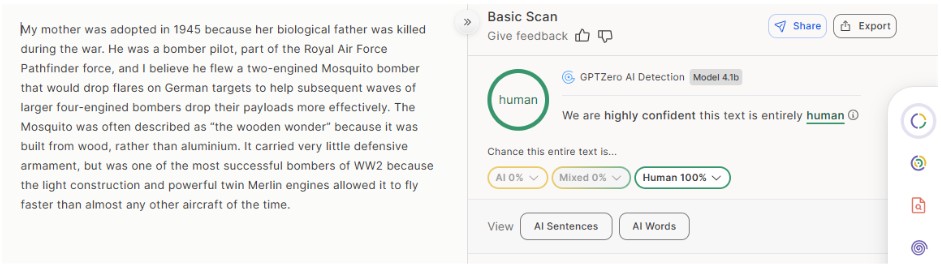

Finally, GPTZero also declared that my paragraph was 100% written by a human. So far, so good! The system works!

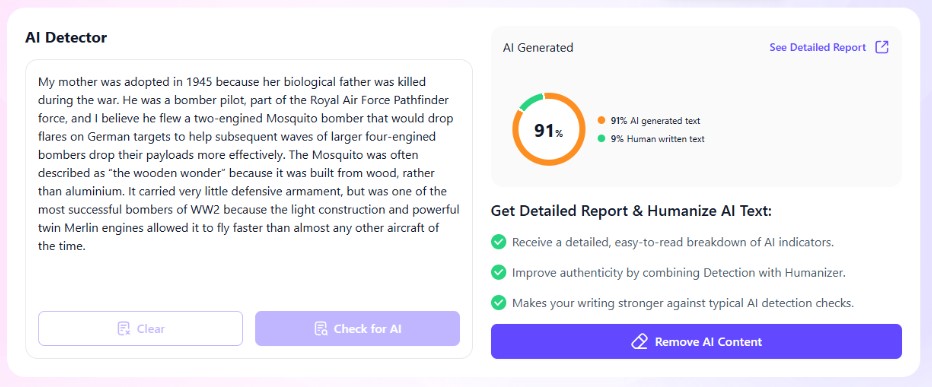

But as I continued experimenting, something weird happened. The next AI detector on the list was textguard.ai, and it suggested with 91% certainty that the text Grammarly, Copyleaks, and GPTZero had all ranked as “human written” was actually written by AI. It even offered to “humanize” it for me, which seems a bit ironic: using AI to make your copy read more “human” than the 100% human-written text it actually was.

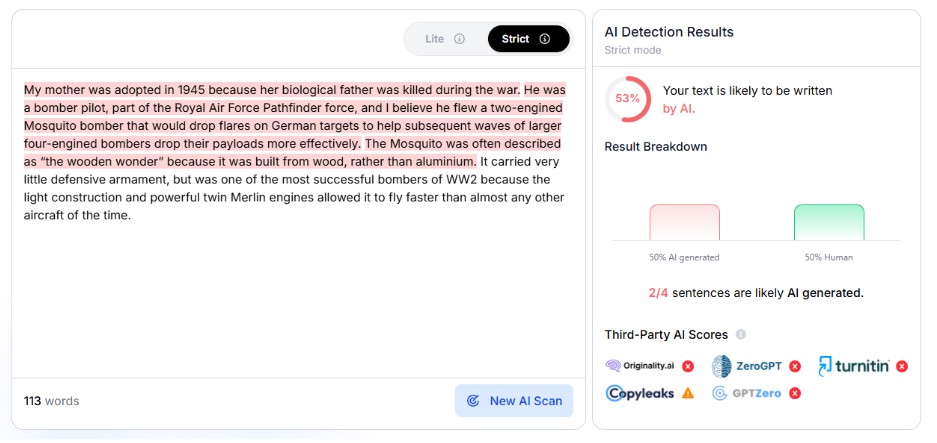

Another AI-detection platform, phrasely.ai, similarly argued that 53% of my text was “likely to be written by AI” and highlighted what it identified as the troublesome sentences. The fact that the sentences were so long didn’t seem to dissuade it.

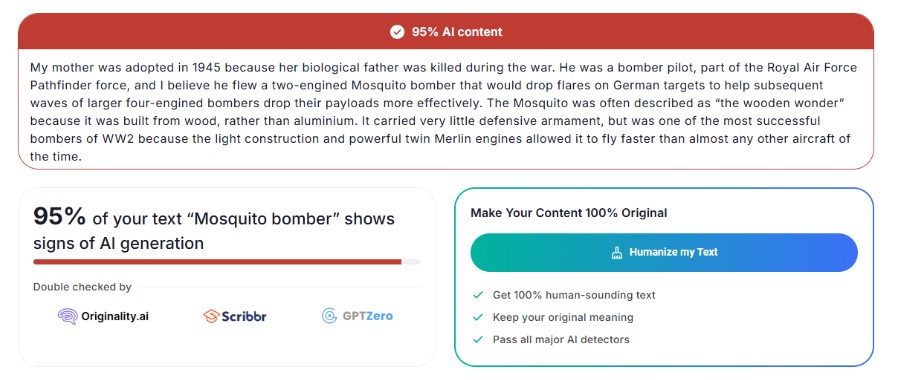

Finally, I tried the AI Detector by JustDone and was told with 95% certainty that the copy I’d pasted into it was AI-generated. Once again, I was offered the chance to “humanize my text” for a modest fee and account setup.

I found it astonishing that six different sites could be split so evenly down the middle when it came to deciding whether or not my text was written by AI, which suggests that none of them actually knew for certain. I had 100% certainty that not a word of my paragraph had been generated by AI, so it made me quite angry when JustDone, Phrasely, and Textguard claimed otherwise.

Did they have some kind of justification for making that claim? What had they flagged that Grammarly, GPTZero, and Copyleaks hadn’t? Or was the result tied to the fact that they immediately offered me a paid option to “humanize” my text?

One thing is for sure: you’re not guaranteed an accurate answer when you use a so-called “AI detector.” Perhaps some platforms are more reliable than others (I would like to imagine Grammarly can be trusted), but I think it’s equally likely that we’ve now gone past the point of no return when it comes to generative AI, and there’s no way to accurately tell whether or not something is AI-generated just by reading it.

The results of this experiment did give me momentary cause for concern. I thought about the customer I was working with feeding the blurb I’d written into an AI detector to see if I’d written it myself or used generative AI to do the hard work. Presumably, the results would depend on the platform he was using rather than any actual evidence of generative AI!

But in all honesty, I thought the blurb I’d written was strong enough to stand on its own merits, so I didn’t let that concern bother me for too long. I think the sad, scary, but inevitable thing about the rapid adoption of generative AI is the fact that we’re soon not going to be judging prose on whether or not it was generated by AI, but whether it’s actually effective and enjoyable to read.

Writing is, after all, just a form of communication. If AI is able to help real human authors communicate more effectively, perhaps that’s a factor worth considering.

For the moment, I’m still keeping generative AI well away from my writing, but I’ve got a feeling more and more authors are going to start incorporating generative AI into their workflow, and if my experiment proves anything, it’s that there’s going to be very little we can do to call them out on it.

But that’s just my opinion. What do you think? Have you used AI detection platforms to root out AI-generated writing? Do you trust them? And what do you think their apparent ineffectiveness means for the future?

Forgot to add. The first thing you see on the Grammarly website and on its own home page is this:

“You think big. We’ll take care of the details.

Work with an AI partner that helps turn your thoughts into writing that’s clear, credible, and impossible to ignore.” Oh my goodness what hypocrites.

Very well said: “I think the sad, scary, but inevitable thing about the rapid adoption of generative AI is the fact that we’re soon not going to be judging prose on whether or not it was generated by AI, but whether it’s actually effective and enjoyable to read.”

Definitely, without a doubt, the platform was trying to sell you something to make it “human”.

Grammarly was introduced in 2009 and ProWritingAid and InkShift in 2013. No one was screaming “AI” back then. Google, Amazon, chats-as-a-service is AI! And no one protested. Funny. And yet graphic designers and a few other artists embrace AI. Why so much judgement on you and other writers? Haha.